Do you trust machines? “I don’t know.” “I don’t think so.” “Yes, of course.” “Yes and no.” These are probably the most common answers to this question. But if you think about it, you start to realize that you trust machines more than you suspected. In this post I am going to talk about the RISKS associated with using machines, and specifically about a new and growing kind of risk: the kind that comes from Artificial Intelligence technology. I will also try to argue why now is the time to think about these risks, and why defining business and technical strategies to counter and mitigate them is becoming one of the next competitive and innovation advantages for both AI producers and AI users.

But before speaking about risks, let’s set our starting point with a quick reminder of how relevant and pervasive Artificial Intelligence is becoming. We, the general public I mean, know very well that Artificial Intelligence is growing, and its impact is not new to us.

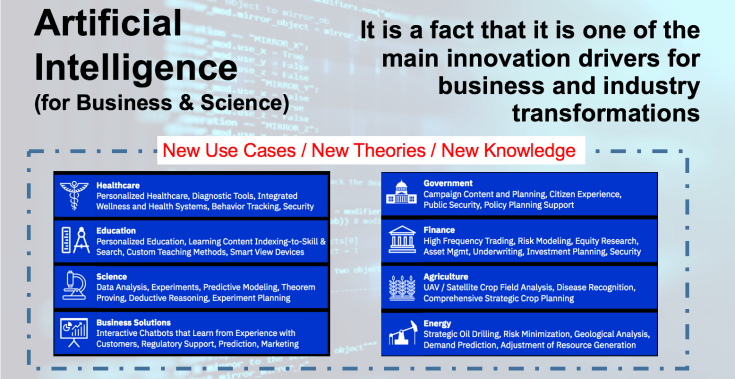

It is no longer a niche phenomenon: it is in everyday products as well as in future use cases.

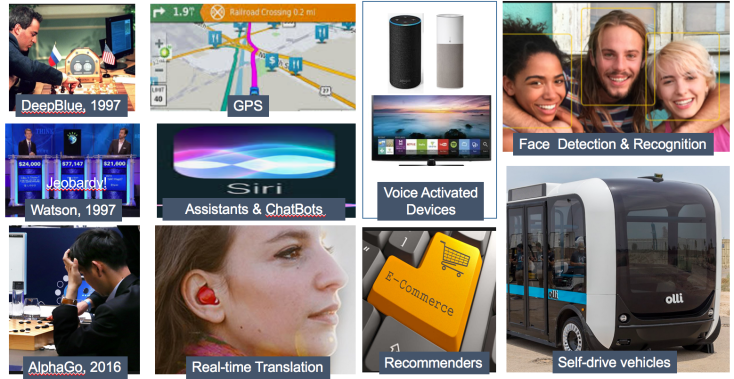

The figure below depicts a number of popular, general use cases as examples.

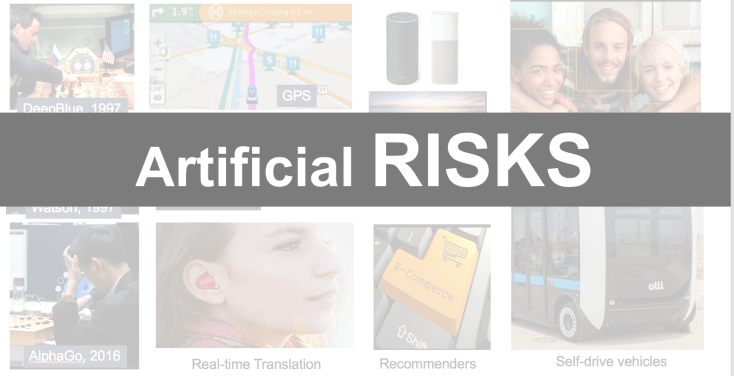

We first got to know it when AI systems started to compete with humans in “intelligent” games like chess and, more recently, Go, and the machines started to win.

But we also know that AI is behind plenty of other systems, including complex ones like self-driving cars, translators (in the figure I included a reference to a nice gadget, an in-ear translation device: https://www.boredpanda.com/real-time-translator-ear-waverly-labs/), and recommender systems for e-commerce and more. More and more often, AI is also in our cars and homes, accessible by voice through “assistant” microphones or simply our TVs. A simplified and very well-known form of AI is the optimization algorithms that help us find directions, as in GPS navigation.

AI and games is a great and historic theme. But winning a game is one thing; solving practical industrial problems, like helping a radiologist interpret an MRI, is another.

We are gamifying this too. BioMind, a Chinese start-up, did so last year when it decided to organize a public competition between machines and radiologists in recognizing cancer in MRI scans (https://news.cgtn.com/news/3d3d414d776b444e78457a6333566d54/share_p.html).

The system won, but I personally do not like this kind of game, especially when you read that only a small fraction of AI health research papers followed a strong scientific validation process for their results.

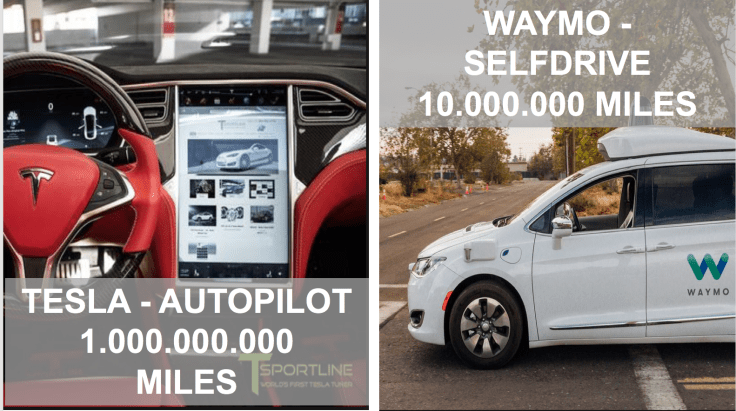

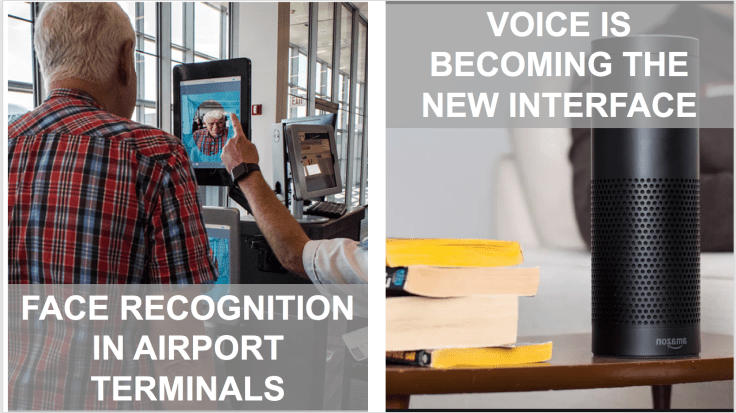

Changing the stage, you find plenty of other examples of AI systems, such as self-driving cars, and you are surely impressed when you read how far current self-driving cars have travelled (the next figure shows numbers from Tesla and Waymo). At the same time you find AI everywhere, helping us: simplifying check-ins at airports, or using voice recognition in our homes.

But the main question is: how is AI perceived by business right now? Well, it is a magic wizard!

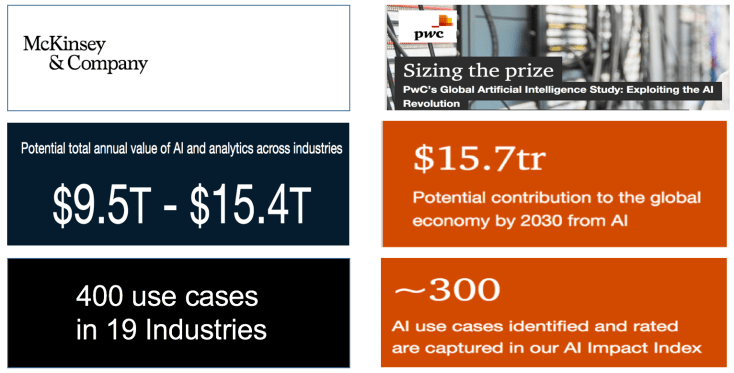

We know that it could have a great impact on our economy: optimistic views say that AI has the potential to generate additional value of about 15 trillion dollars in the next decade or so (see the work from McKinsey as well as PwC).

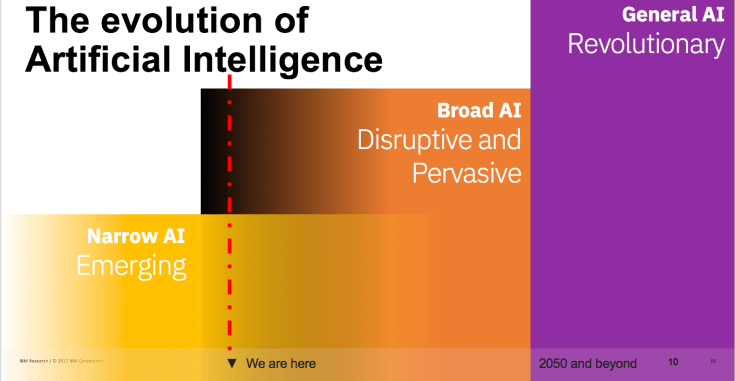

Today AI is “narrow,” meaning it is applied to particular tasks or domains. We are at the beginning of our journey! Some decades from now we envision a time when more autonomous compute capabilities will enable more “General AI.”

In between, we see broader use of AI: capabilities that can more easily be scaled from task to task and from domain to domain, solving more complex problems in both consumer and enterprise applications. AI will become pervasive across industries, assisting decision-making and enabling brand new opportunities for differentiation and value. So we are just at the beginning, folks!

So now let’s change perspective and replace the word INTELLIGENCE with the word RISKS. What happens? We have Artificial RISKS.

So the question now is: Do we have risks in using AI technology?

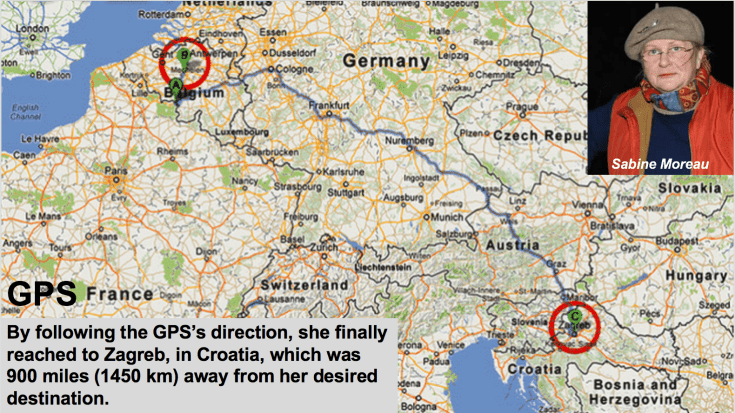

Take the case of this lady, Sabine, who was travelling from her home to Brussels, about 50 km away, and found herself several hours later in Zagreb, Croatia. Sabine trusted her GPS system to the extreme.

Have you ever found yourself in a similar scenario? Do you trust your GPS?

Let’s now consider another theme: face recognition. In Italy we have speed-check systems along the highways, like the one in the figure. Here we call it Tutor, like a tutor that is coaching Italians to drive better!

Usually there are plenty of signs ahead of the speed checker that indicate its presence and warn you that you will be monitored.

Similarly, what you see on the right is a sign warning citizens that, along the roads in London, their faces will be checked by the police in an experimental setting (see the figure). It is also interesting that the first fines have been issued by the police to citizens who were covering their faces to avoid being monitored.

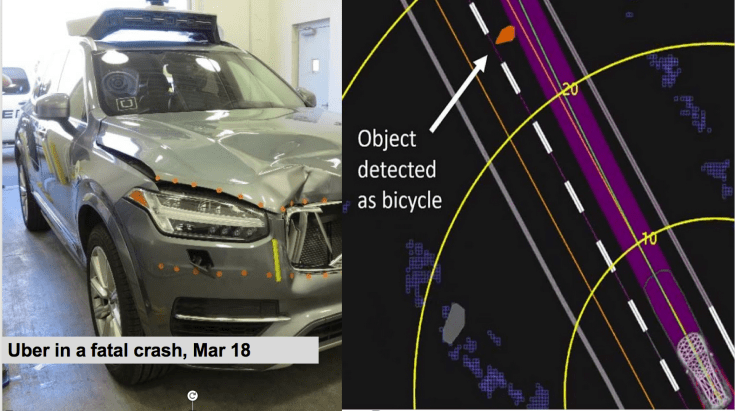

Self-driving cars… are OK! Their trials are going quite well… but… the first accidents are unfortunately happening, like the one that involved an Uber car last year. In this specific case the AI system detected an obstacle several seconds before the accident, but emergency braking had been “disabled” for some reason… so problems can lie with the people who use AI as well, and not only on the technology side (https://techcrunch.com/2018/05/24/uber-in-fatal-crash-detected-pedestrian-but-had-emergency-braking-disabled/)

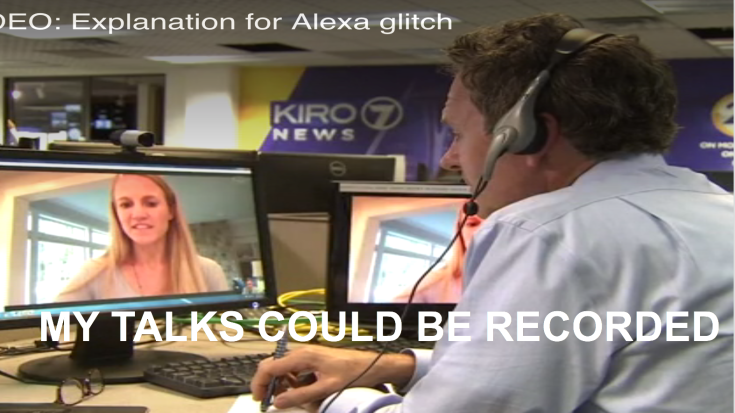

Another area with potential risks is systems that analyze speech. Our voices are recorded in the cloud, for some purpose or for none. A case worth mentioning is the story told by the lady in this picture, who claimed that Amazon Alexa accidentally recorded her voice and her husband’s during a private conversation between the two, and sent the recording to a friend of theirs who was in their address book. This was apparently a bug: for some reason the system activated itself and sent the recording to someone else.

Another nice example of how technology is entering our intimate lives is the one shown in the next figure. Basically, the lady here had a sort of free “selfie” ultrasound, ahead of her gynecologist, and discovered that she was pregnant (https://www.texasrighttolife.com/amusement-park-thermal-camera-gives-one-lucky-family-a-sneak-peak-of-their-preborn-baby/)

Another example: who is real, the girl on the left or the one on the right? You can try other examples at this site: http://www.whichfaceisreal.com/index.php

After trying a few times you will learn that, to distinguish the real from the fake, you have to concentrate not on the person shown but on the background!

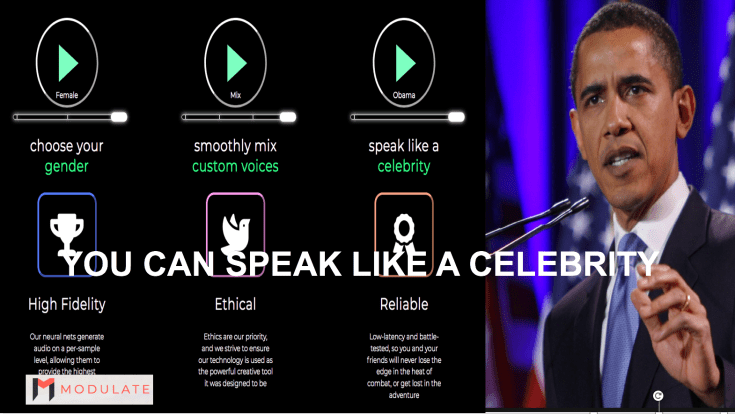

Do you want to speak like Obama? It is enough to record 2 minutes of your target’s voice, and after that a start-up founded by MIT students can modulate your voice to sound like the target (https://modulate.ai/)

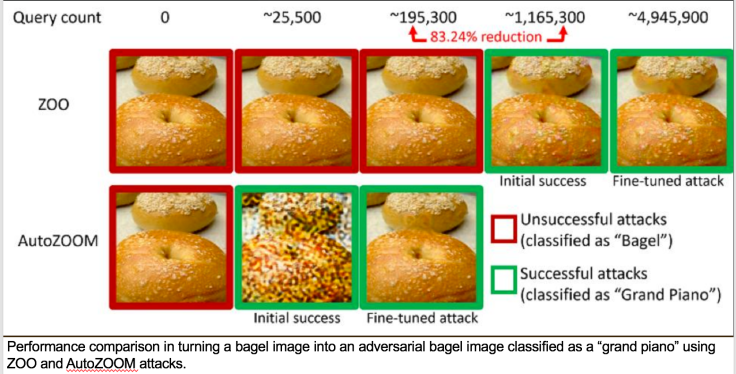

Last example: does the next picture show a bagel or a grand piano? A tiny change to an image, even to a single pixel, can fool image recognition completely. It is interesting that the slide also shows results for two algorithms from this line of research, ZOO and AutoZOOM, which are black-box methods for generating such attacks and so for testing how robust a model is. Today’s defenses are still not able to prevent every kind of attack.
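For readers who like to see things in code, here is a minimal toy sketch in Python of the “tiny change” effect. It is only an illustration under invented assumptions: the 3x3 “image”, the weights, the bias and the function names are all made up for this post, and it is not the method behind the figure or any real image recognition system. It simply shows how a model that leans heavily on one pixel can flip its answer when only that pixel is nudged.

```python
# Toy illustration only. The labels, weights and 3x3 "image" below are
# invented for this sketch; they do not come from any real model.

LABELS = ("bagel", "grand piano")

# Hypothetical linear classifier: one weight per pixel of a 3x3 grayscale image.
# The centre pixel has a very large weight, so the model is fragile there.
WEIGHTS = [
    0.1, -0.2, 0.1,
    -0.2, 20.0, -0.2,
    0.1, -0.2, 0.1,
]
BIAS = -1.0


def classify(pixels):
    """Return "grand piano" if the weighted pixel sum plus bias is positive, else "bagel"."""
    score = sum(w * x for w, x in zip(WEIGHTS, pixels)) + BIAS
    return LABELS[1] if score > 0 else LABELS[0]


def most_sensitive_pixel(weights):
    """Index of the pixel whose value influences the score the most."""
    return max(range(len(weights)), key=lambda i: abs(weights[i]))


# Pixel intensities in the range 0..1.
original = [0.5, 0.5, 0.5,
            0.5, 0.02, 0.5,
            0.5, 0.5, 0.5]

# Nudge only the most sensitive pixel, by 0.05 on a 0..1 scale.
attacked = list(original)
target = most_sensitive_pixel(WEIGHTS)
attacked[target] = original[target] + 0.05

print(classify(original))  # label for the untouched image
print(classify(attacked))  # label after changing a single pixel
```

In this sketch the attacker is assumed to see the model’s weights, which makes finding the fragile pixel trivial. Real attackers often cannot see inside the model and have to probe it from the outside.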

So, the last chapter… why do we need to think and reason now about making AI more trustworthy?

From this point of view we are where we were at the beginning of the last century. I like old pictures like the ones in the figure.

All the images show cities during the emergence of the car industry: beautiful roads, squares and so on, packed with cars and pedestrians moving in all directions, with few or no street signs around. Well, AI trustworthiness is about the need to create traffic rules!

Why? First of all, we need to cope with our limits, human limits I mean. We know very well from psychologists how many biases we have. We also need to manage plenty of new territories, like bias in the data, in the AI models, or in how we build such AI systems.

AI ethics, and how to make AI systems more robust, is becoming de facto a new marketplace. During the last two years a number of international public and private round tables produced recommendations and guidelines in this area. This is just the beginning.

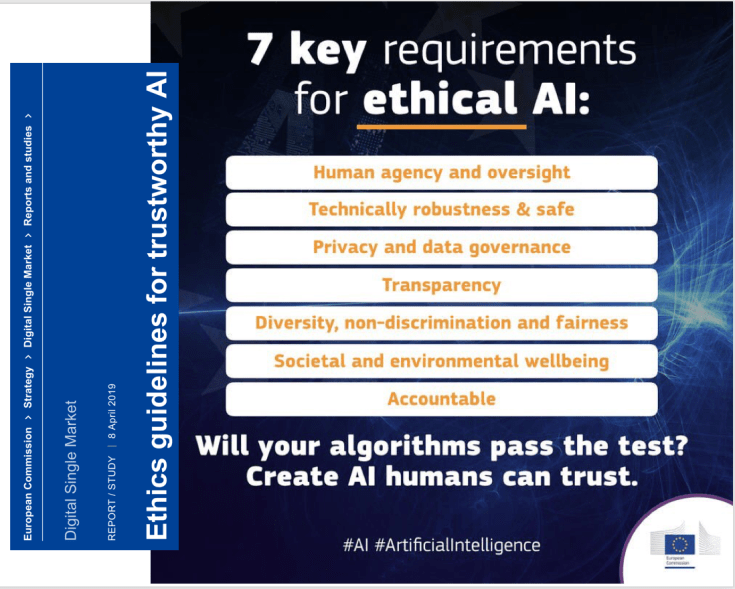

The most recent and most advanced framework is the one just published by the European Union: the Ethics Guidelines for Trustworthy AI. The figure reports the principles and the 7 requirements of the EU report.

In summary, all of this is, in my opinion, not only important but also a foundational part of our lives and our businesses. Knowing how to make our systems trustworthy is becoming mainstream, and an innovation differentiator in the medium and long term for all kinds of companies.
